Abstract

While chain-of-thought (CoT) reasoning has substantially improved multimodal large language models (MLLMs) on complex reasoning tasks, existing approaches largely rely on long textual reasoning trajectories and provide limited mechanisms for learning stable visual attention policies. Our analysis shows that current MLLMs exhibit weak visual focus: early-stage visual misalignment is rarely corrected during subsequent reasoning, leading to error propagation and failed inferences. We argue that this limitation stems from inadequate credit assignment for visual attention during training. To address this issue, we propose SAYO, a visual reasoning model trained with a reinforcement learning (RL) framework that introduces a region-level visual attention-based reward. This reward explicitly aligns optimization signals with visually grounded reasoning steps, enabling the model to learn more reliable attention behaviors. Extensive experiments across multiple multimodal benchmarks demonstrate that SAYO consistently improves performance on diverse reasoning and perception tasks.

More Example

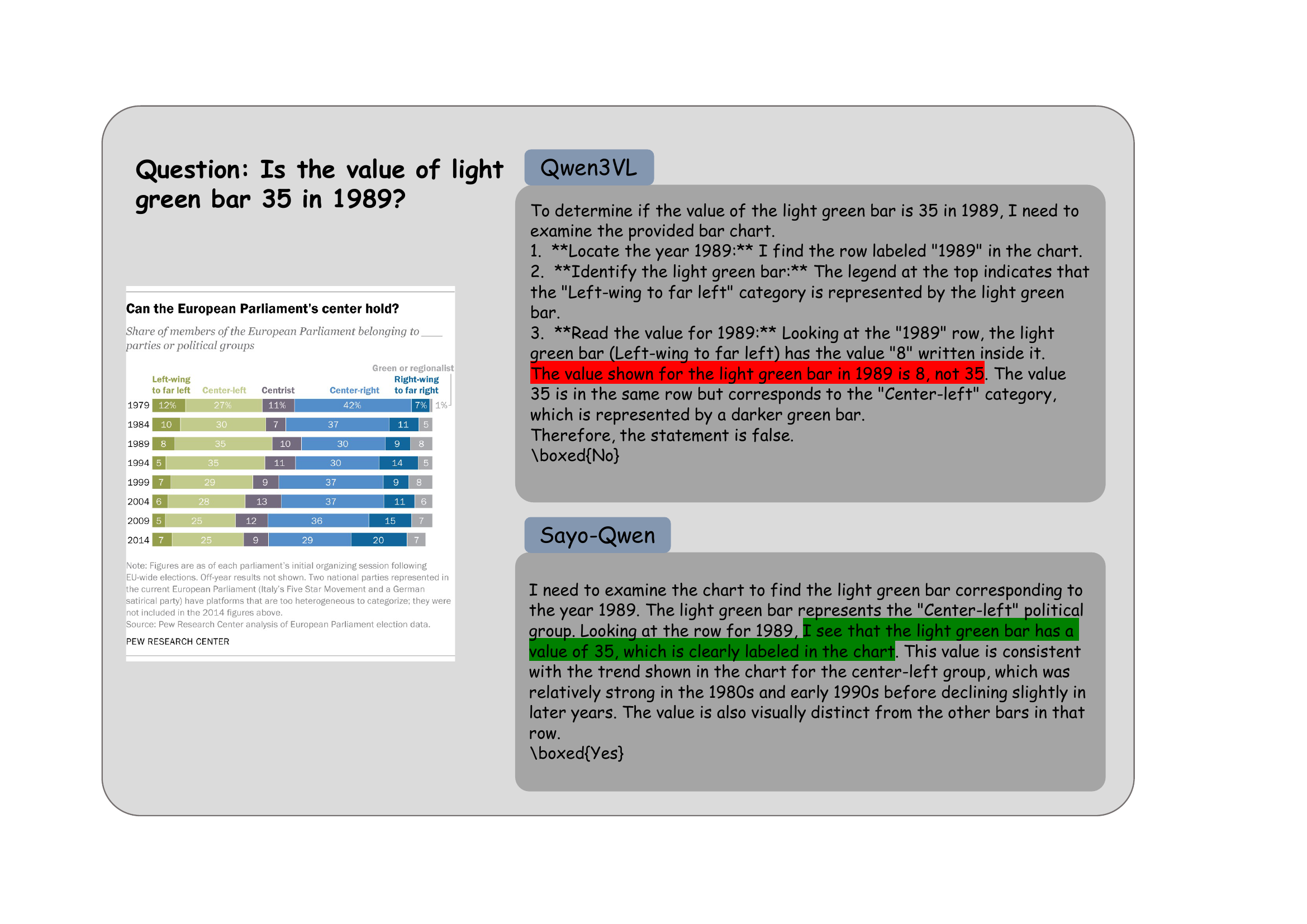

A case in ChartQA.

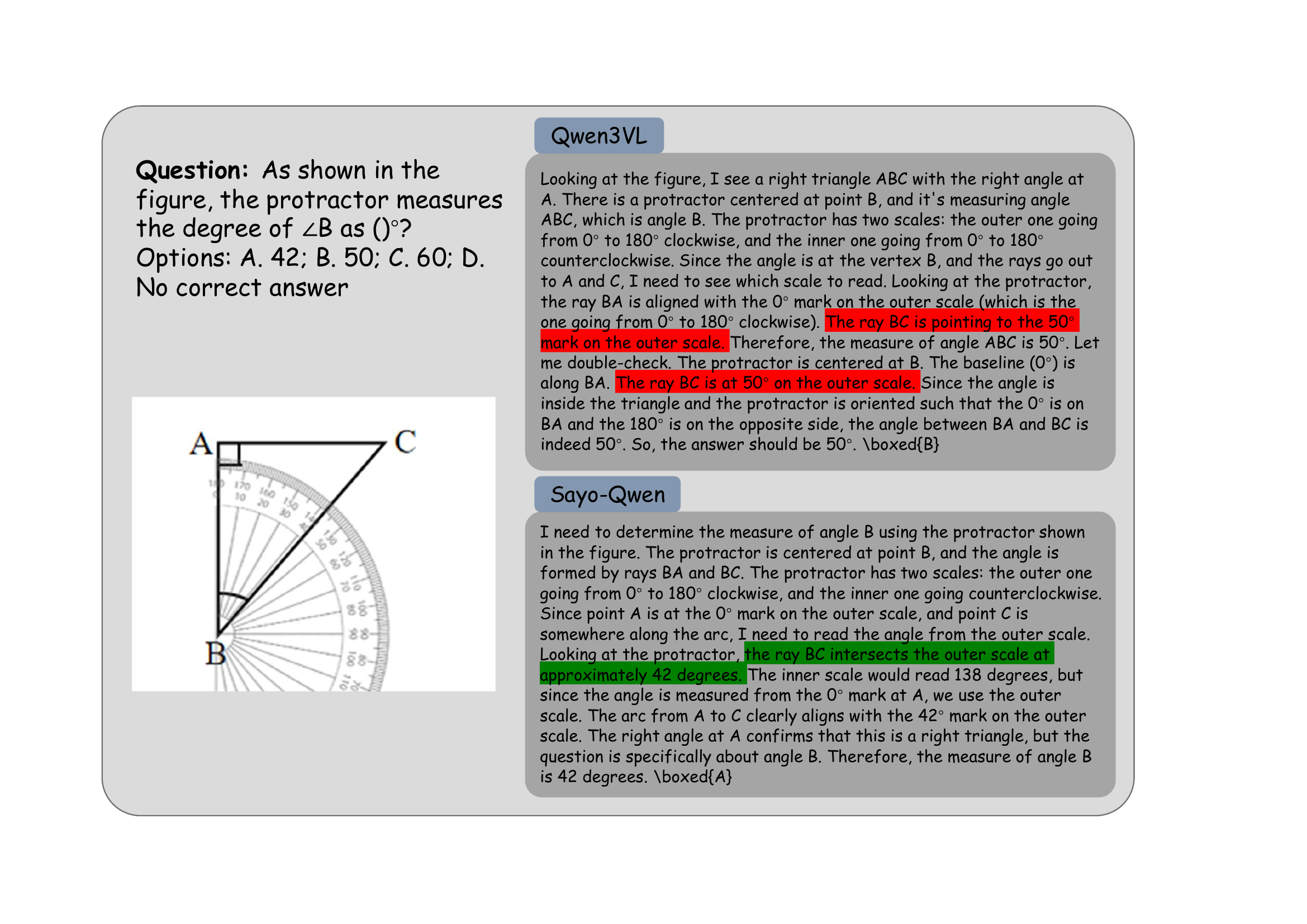

A case in We-Math.

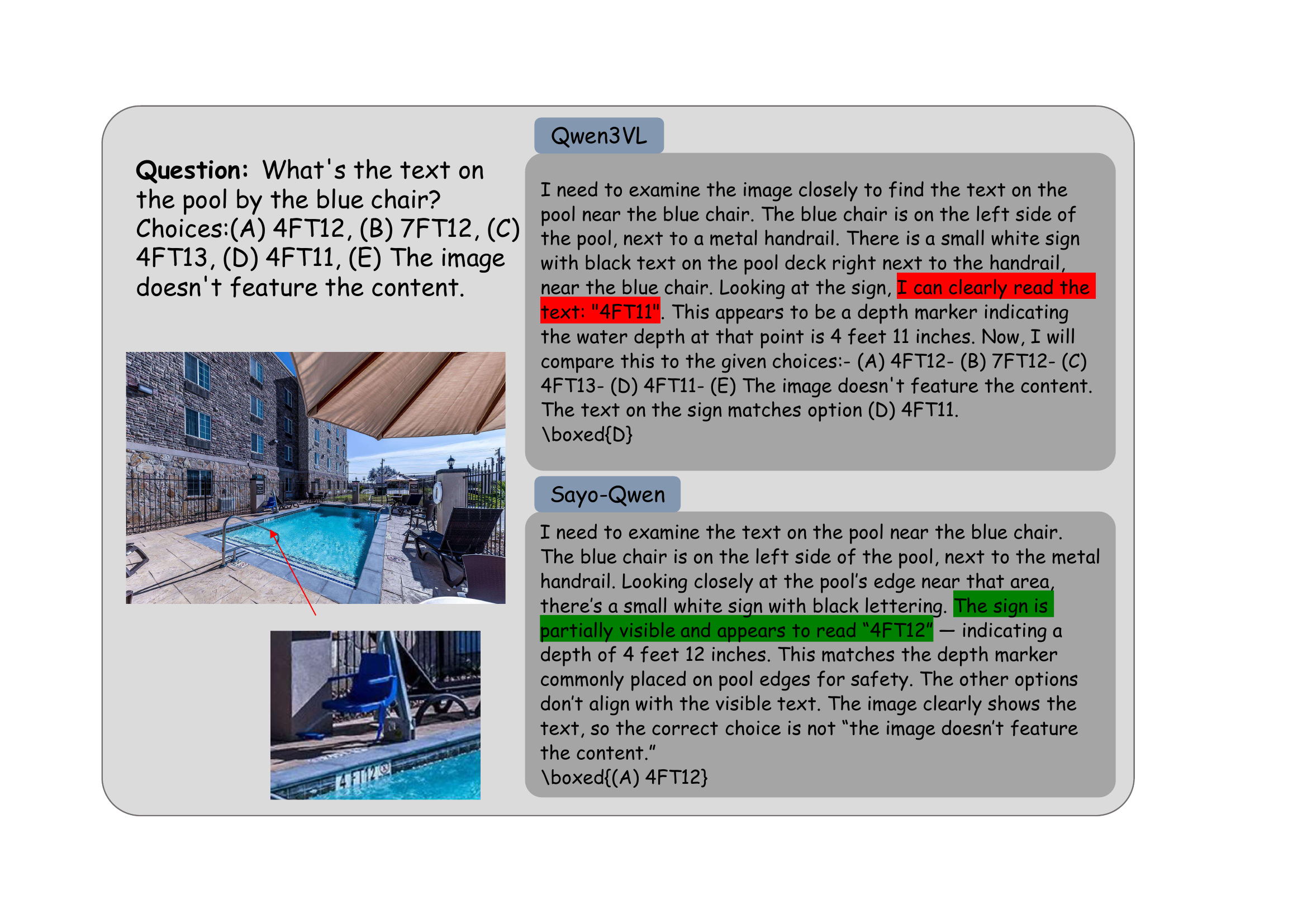

A case in MME-Realworld.

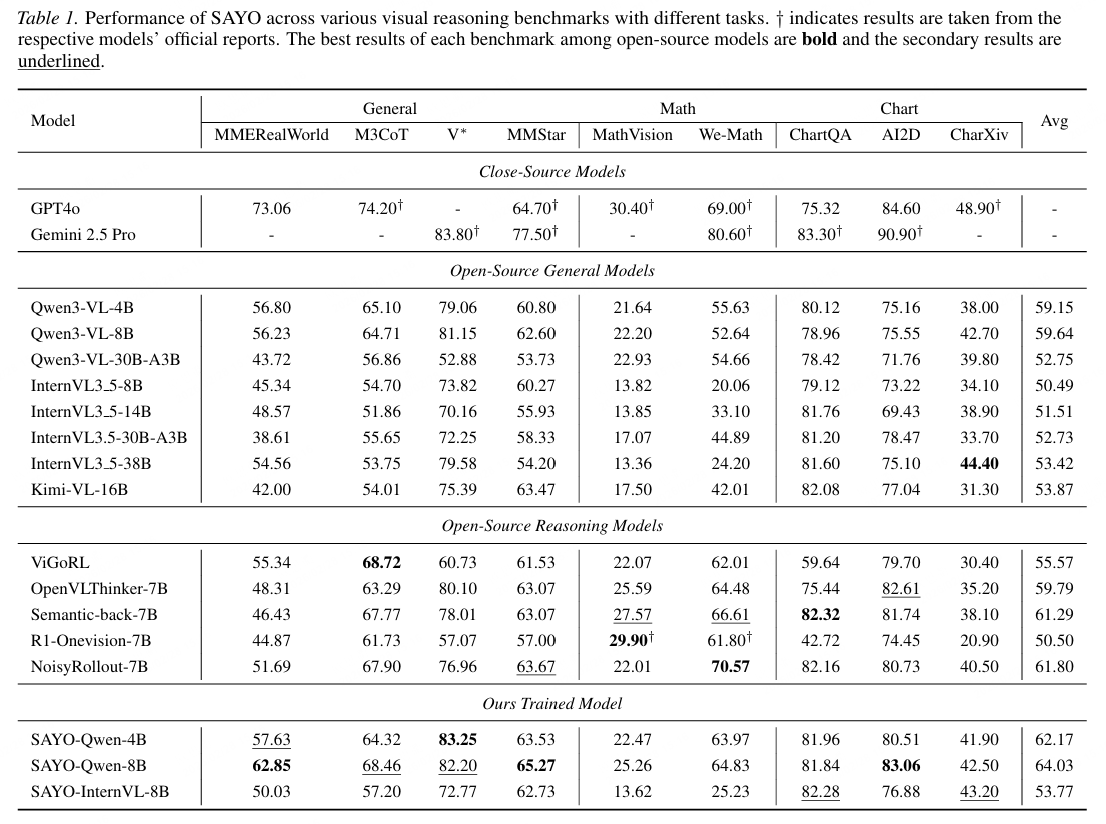

Results

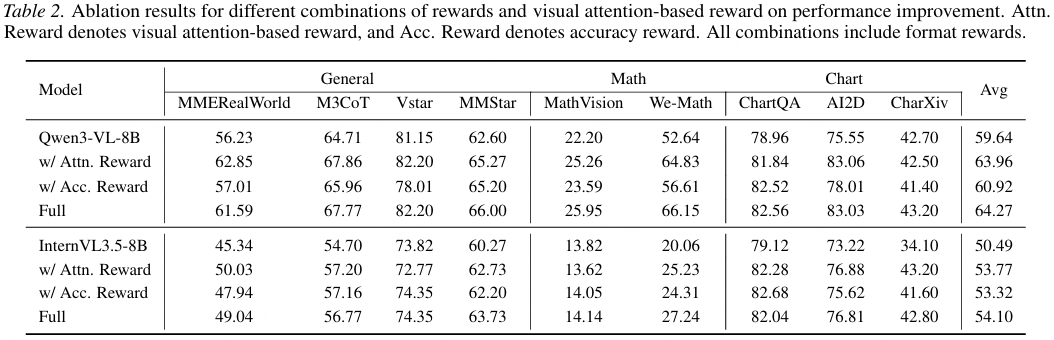

Sayo has better performance in various of understanding and reasoning tasks without refocus or visual prompts. Besides, models trained using only the visual attention reward achieve performance comparable to those trained with the combined reward, whereas models trained with accuracy rewards alone exhibit only marginal gains. These findings suggest that deficiencies in current MLLMs stem less from limited reasoning capacity and more from insufficient visual perception and localization.

BibTeX

@article{domllmsreallyseeit,

title={Do MLLMs Really See It: Reinforcing Visual Attention in Multimodal LLMs},

author={Siqu Ou and Tianrui Wan and Zhiyuan Zhao and Junyu Gao and Xuelong Li},

year={2026},

eprint={2602.08241},

archivePrefix={arXiv},

primaryClass={cs.AI},

url={https://arxiv.org/abs/2602.08241},

}